Autonomous Driving Reaches Deep Water; NVIDIA Aims to Build the Foundation First

On the eve of the Beijing Auto Show, Wu Xinzhou, global vice president of NVIDIA, held media interviews in Beijing, systematically laying out NVIDIA's latest thinking, technical strategy, and future plans in autonomous driving.

On the surface, this was a technical briefing about chips, models, platforms, and products. Viewed against the industry's current phase, however, NVIDIA's real message was less about which products it has and more about how the industry should move forward as autonomous driving enters a deeper engineering phase.

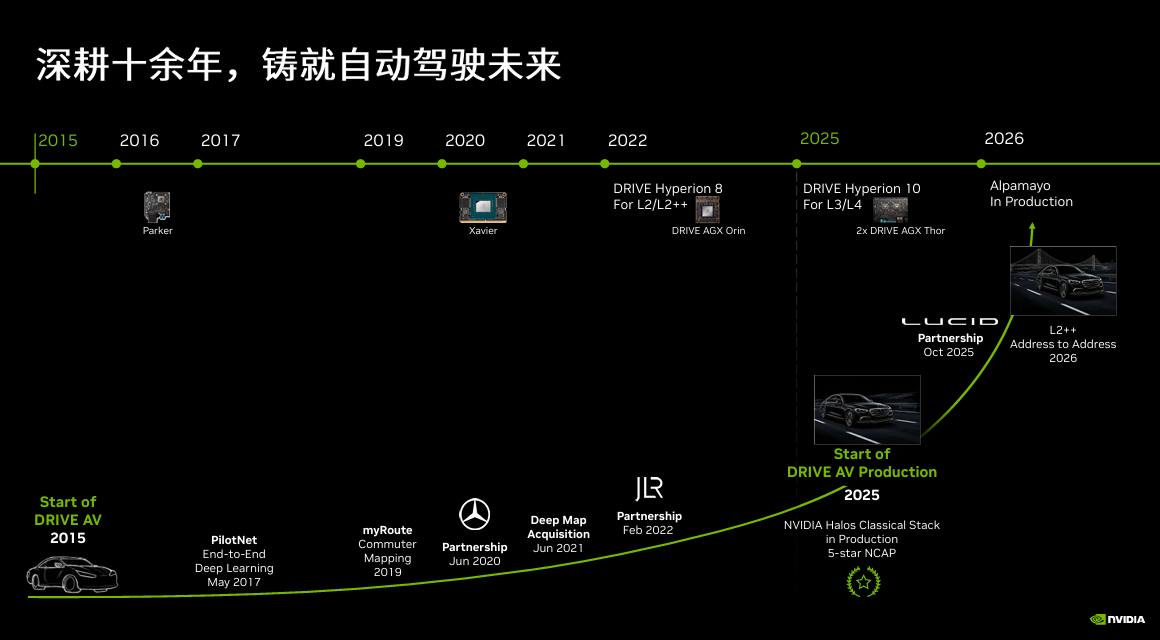

In recent years, the autonomous driving sector has been no stranger to hype or divergent approaches. Some have bet on vehicle-centric intelligence, others have doubled down on Robotaxi, while still others insist on first mastering L2+ and L3 before gradually advancing toward higher levels of autonomy. Beneath all the buzz, a more pressing question is emerging: as the technology moves beyond the proof-of-concept stage, what truly differentiates players is no longer any single capability, but rather who can integrate computing power, models, simulation, safety, and mass-production systems into a sustainable, industrialized pathway for steady progress.

It is precisely in this sense that when NVIDIA talks about autonomous driving today, it’s no longer just about a single chip or a domain controller solution—not even just about Level 4 itself—but rather about addressing a much bigger question: who will build the truly universal foundation.

Why Autonomous Driving Is Back in the Spotlight

Autonomous driving is not a new proposition, but it is being revalued.

In recent years, this industry has experienced too much back-and-forth: Is L2+ the ultimate solution? Does L3 hold any practical significance? How much longer will it take to reach L4? Will robotaxis achieve commercial viability before passenger vehicles?

Disagreements have always existed, but today a consensus is becoming clearer: autonomous driving is no longer just "a part of automotive intelligence," but is being re-understood within a broader physical AI framework.

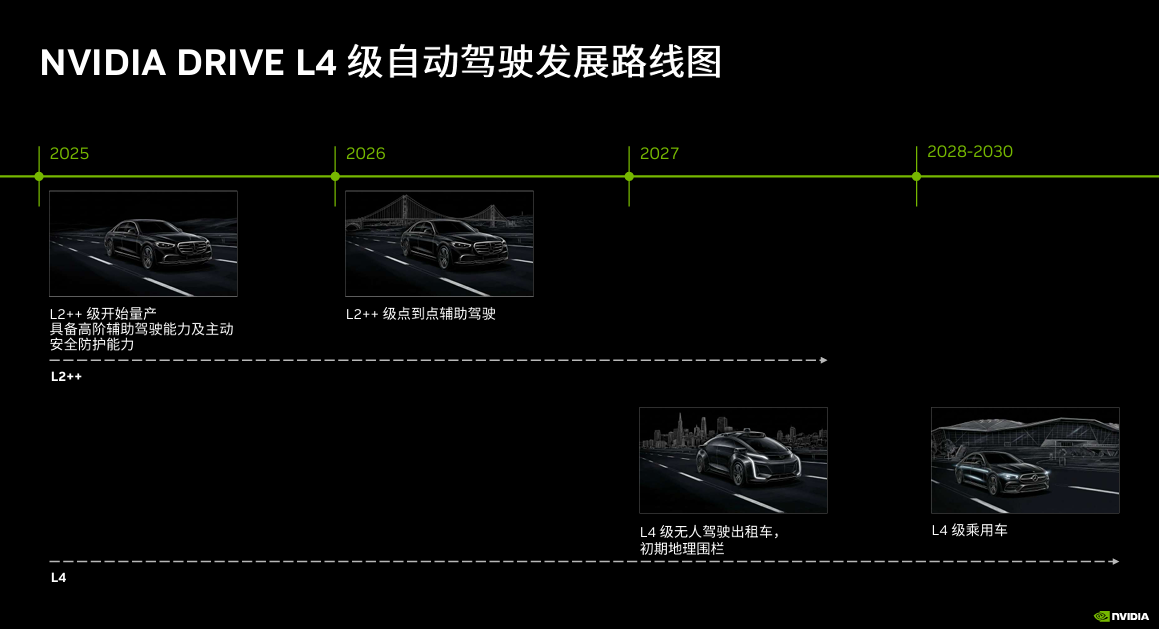

Wu Xinzhou's judgment in the interview was very clear: "We feel that we can see the dawn of L4." In his view, autonomous driving is approaching its own "ChatGPT moment."

Image source: NVIDIA

This does not mean the industry has easily overcome all challenges, but rather that the technological paradigm and industry expectations have changed. In the past, autonomous driving relied more on modular engineering, rule stacking, and local optimization; today, as end-to-end approaches, world models, multimodal reasoning, and more efficient training and simulation capabilities continue to mature, autonomous driving is shifting from "function definition" to "system definition."

As Three Paths Diverge, NVIDIA Wants to Control the "Platform Layer"

If we look at today's autonomous driving industry as a whole, we can broadly see three parallel paths currently being pursued.

One path is the technology company route. Representative players emphasize end-to-end capabilities and direct operations, aiming to achieve high-level autonomous driving first through Robotaxis. In April this year, Waymo opened its driverless ride-hailing service to all users in Miami and Orlando; Pony.ai is advancing driverless Robotaxi pilot operations in Dubai and plans to launch a public, fee-based service this year.

The second approach is the incremental path adopted by automakers. Rather than directly pursuing L4 operations, traditional OEMs prefer to advance progressively from L2+ to L3, accumulating user awareness, regulatory compliance experience, and engineering expertise within their mass-production systems. Mercedes-Benz is a typical representative. MB.DRIVE ASSIST PRO reportedly entered the Chinese market by the end of 2025 and is set to expand to the U.S. market in 2026.

What NVIDIA truly aims to occupy is the third path: platform empowerment.

It does not operate a fleet itself, nor does it want to limit itself to being just a chip company. Wu Xinzhou stated plainly in the interview: "We hope all car manufacturers use our standardized platform, promote data sharing, and drive the entire industry toward L4." This statement highlights NVIDIA's core intention in autonomous driving: it is not competing for a specific model or project, but for a position at the platform level.

The logic behind this is actually very realistic.

The real challenge of autonomous driving has never been just about getting a car to run in a certain limited scenario, but rather about enabling different car manufacturers, different models, and different regions to advance the system to mass production and continuous iteration with lower costs and higher efficiency.

Tech companies are competing for operational implementation, car manufacturers are competing for mass production schedules, and NVIDIA is vying for the infrastructure that can enable both to move forward faster.

Official information shows that BYD, Geely, Isuzu, and Nissan have developed L4-ready vehicles based on NVIDIA DRIVE Hyperion; NVIDIA's fully integrated Robotaxi project in collaboration with Uber is scheduled to launch in Los Angeles and San Francisco in the first half of 2027, and expand to 28 markets by 2028.

This "platform empowerment" approach has taken more concrete form in the partnership developments surrounding the Beijing Auto Show. Chery has extended its collaboration with NVIDIA from assisted driving to in-vehicle AI and robotics; Desay SVI is advancing mass-produced intelligent driving solutions based on DRIVE Hyperion, DRIVE AGX Thor, and NVLink; Pony.ai has also launched a new-generation autonomous driving domain controller built on the DRIVE Hyperion platform. Rather than just showcasing partnerships, NVIDIA is proving a point: its role is evolving from a chip supplier to an organizer of "platform + ecosystem."

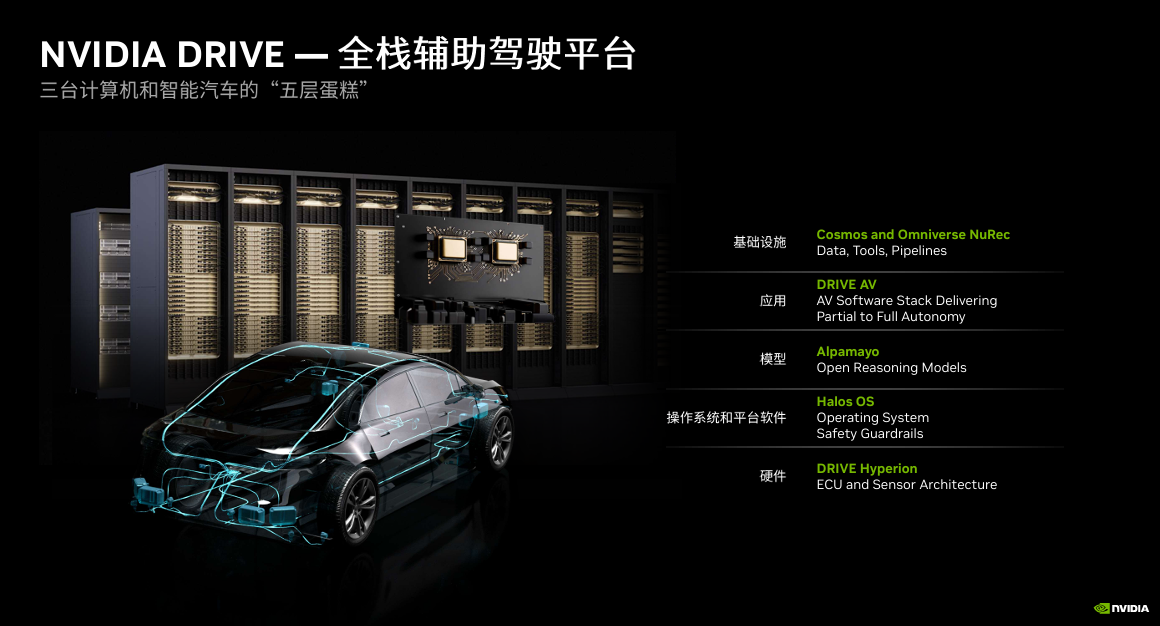

Behind the "Five-Layer Cake" Lies More Than Just Hardware Stacking

Taken product by product, NVIDIA's layout in autonomous driving is familiar: it has computing platforms, operating systems, models, and simulation and cloud training capabilities. What is truly noteworthy about this interview is not these capabilities themselves, but how they are being reorganized into a continuous industrial chain.

Wu Xinzhou used "three computers" and a "five-layer cake" on-site to explain this system.

Image source: NVIDIA

The "three computers" are the on-vehicle computing platform responsible for real-time inference, the cloud-based training platform used for model training, and the increasingly critical simulation computing platform.

Based on these three capabilities, NVIDIA builds a "five-layer cake": the bottom layer is a standardized hardware platform for high-level autonomous driving, followed by the safety and platform software layer, then the open models, simulation tools, and datasets, above which are application-layer solutions for original equipment manufacturers and partners, and the top layer is cloud-based training and simulation infrastructure.

What matters most about this framework is not that it adds "more features," but that it reorganizes previously scattered capabilities.

At the foundational level, NVIDIA aims first to define the hardware and sensor reference architecture for the L4 autonomous driving system. DRIVE Hyperion is defined as a complete, production-ready autonomous driving development platform and reference architecture, integrating a standardized sensor suite, a high-performance computing platform, and a robust software stack onto a single foundation. Its goal is not to do everything for automakers, but rather to significantly reduce the redundant costs automakers would otherwise incur by building their underlying systems from scratch.

At the next level up, the Halos safety system and platform software are tasked with "stabilizing the foundation." Their value lies not in novel concepts, but in standardizing integration across different hardware, sensors, and configurations as much as possible. Halos OS is positioned within a unified safety architecture, providing a production-grade safety foundation for L4 autonomous driving based on DRIVE Hyperion.

Meanwhile, at the model and data layer, NVIDIA aims to do more than just release a model. Alpamayo is defined as an open product suite comprising AI models, a simulation framework, and a physical AI dataset, designed to help developers accelerate the development of safe, interpretable, and reasoning-capable autonomous driving systems. In other words, it delivers not an isolated algorithm, but an end-to-end development pipeline spanning training, simulation, and in-vehicle deployment.

More notably, the significance of simulation has been markedly elevated. As autonomous driving evolves from modular to end-to-end systems—operating on a “pixels-in, trajectories-out” logic—traditional module-by-module verification is becoming increasingly inadequate. Validating new models, in essence, means verifying their understanding of and ability to reconstruct the physical world. Consequently, simulation is no longer merely an auxiliary tool; it is becoming a core component driving the engineering advancement of high-level autonomous driving.

It is precisely here that NVIDIA has differentiated itself from many traditional suppliers. When talking about autonomous driving today, it’s no longer about “what kind of chip I have,” but rather about “whether I can turn the hardest, heaviest, and most expensive part of the autonomous driving development pipeline into a reusable foundation for the industry.”

More Important Than the Timeline Is Its Judgment on L3, L4, and Ecosystem Boundaries

More than the frequently cited roadmap, the answers to several contentious questions raised during the interview reveal NVIDIA's real assessment.

Image source: NVIDIA

First, the relationship between L3 and L4. Recently, the industry has repeatedly debated whether it is advisable to skip L3 and go directly to L4. During the on-site exchange, Gao Shi Auto asked Wu Xinzhou about this topic.

Wu Xinzhou did not accept this simple either-or logic. His judgment was closer to industrial reality: "At least in the short term, L3 is valuable, and L4 is not easy to achieve; the two are likely to coexist for a long time." The implication is clear: although L3 remains contested on responsibility boundaries and takeover time, it already frees up part of the driver's time, while L4 places higher demands on redundancy, safety, remote support, and the overall operations system, and will not be achieved easily in the short term.

Next is LiDAR. Wu Xinzhou has explicitly expressed his confidence in the vision-based approach; however, he also pointed out that for higher-level autonomous driving—particularly L3 and L4—LiDAR remains a critical safety redundancy. The crux here is not simply taking sides, but rather that, once the responsibility boundary is elevated, the system’s requirements for redundancy and verifiability are accordingly revalued. L2++, L3, and L4 are, by nature, not governed by the same sensor logic.

Then there is the trend of car companies developing their own chips. In response to this increasingly evident trend, Wu Xinzhou quoted Jensen Huang: "We don't expect you to buy everything from us, but we also don't want you to buy nothing from us." The remark sounds light, but it carries a strong platform logic: car companies can certainly develop some things in-house, but as long as the industry still needs upstream capabilities such as training, simulation, data, models, and safety systems, NVIDIA can still find its place among them.

There is another detail worth noting. Wu Xinzhou pointed out that the automobile itself is a robot, and in the future, there may be no need for two entirely separate “brains.” AI designed for human-machine interaction and AI designed for driving execution will, in the long term, move toward stronger integration. This does not necessarily mean that the cabin and driving systems must use the same chip, but it does imply that automotive AI will more likely evolve into a unified system rather than two isolated functional islands.

From these observations, it’s clear that what’s truly bold about NVIDIA isn’t just its L4 timeline, but its attempt to seize control early over the most critical capabilities for advanced autonomous driving—platform, models, safety, simulation, and ecosystem collaboration. Once the industry truly transitions from “it works” to “it scales,” those who own the foundational platform often hold more leverage than those with standout individual solutions.

When it comes to autonomous driving today, what the industry lacks is not vision, but a clear path to realize that vision.

Tech companies are using operations to validate the commercial closed loop of high-level autonomous driving, while traditional automakers are adopting a step-by-step mass-production approach to accumulate real-world foundations. NVIDIA, meanwhile, aims to bridge these two paths by first establishing the underlying platform, model toolchain, and safety architecture. Its ambition extends beyond merely participating in autonomous driving—it seeks to secure the most fundamental and critical position before autonomous driving truly enters its scale-up phase.

From this perspective, what NVIDIA is really aiming to convey today about autonomous driving isn't "when L4 will arrive," but rather something else: as high-level autonomous driving begins to truly materialize, the industry will rely on a specific foundational methodology and platform ecosystem to move forward.

This might just be the most intriguing part of the conversation.

【Copyright and Disclaimer】The above information is collected and organized by PlastMatch. The copyright belongs to the original author. This article is reprinted for the purpose of providing more information, and it does not imply that PlastMatch endorses the views expressed in the article or guarantees its accuracy. If there are any errors in the source attribution or if your legitimate rights have been infringed, please contact us, and we will promptly correct or remove the content. If other media, websites, or individuals use the aforementioned content, they must clearly indicate the original source and origin of the work and assume legal responsibility on their own.
